AI Video Temporal Structure Analysis for Content & Advertising

Tools: Python, OpenCV, NumPy, Pandas, Scikit-learn, Matplotlib, Optical Flow Motion Analysis, Statistical Analysis, Feature Engineering

Overview

This project explores a key emerging challenge in AI-generated video: while visual realism has improved significantly, many AI-generated videos still exhibit simplified and highly linear temporal structures compared to human-edited video.

As AI video generation becomes increasingly integrated into content production, advertising, and marketing workflows, this raises an important question: Can AI-generated video reproduce the dynamic pacing and rhythm variations that are critical for attention capture, viewer retention, and storytelling effectiveness?

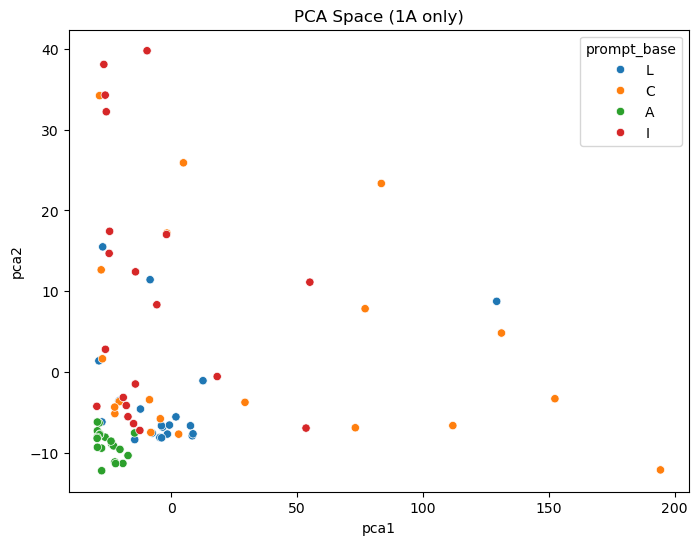

To investigate this, I built a motion-based temporal analysis pipeline to compare AI-generated videos and human-edited videos across rhythm stability, motion variation, and pacing complexity. Using feature engineering and machine learning analysis, this project identifies systematic differences in how AI and human creators structure video over time.

The goal is to better understand how AI-generated temporal patterns may influence viewer engagement and content performance, and how AI video can be more effectively integrated into real-world creative and marketing production workflows.

Approach

Built a video temporal feature extraction pipeline using Optical Flow–based motion signals

Designed motion rhythm features to measure pacing stability, variation, and attention rhythm

Compared AI-generated and human-edited videos across multiple temporal-motion metrics

Applied machine learning classification and statistical testing to validate systematic differences

Analyzed distribution patterns to identify consistent structural tendencies in AI-generated video rhythm

Key Findings

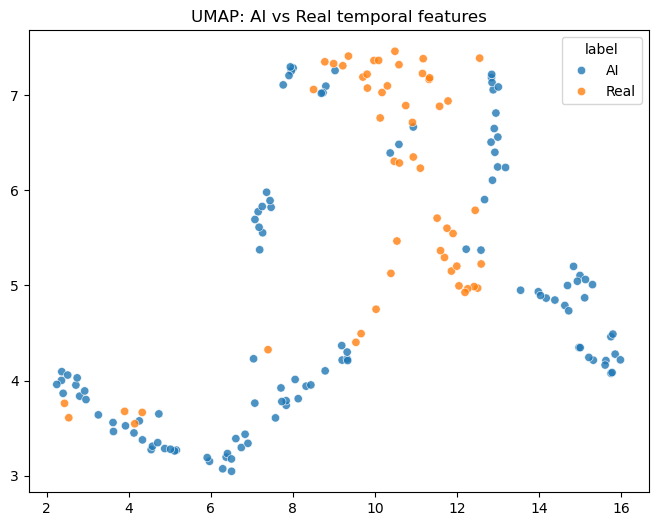

AI-generated videos show a more stable and uniform temporal rhythm: motion intensity changes more smoothly over time, with fewer abrupt shifts in pacing compared to human-edited videos.

Human-edited videos exhibit higher temporal variation and more structured pacing: clearer alternation between build-up and release, along with pauses and speed changes that create attention peaks across the timeline.

AI vs. human videos are distinguishable from temporal-motion features alone: models trained on extracted motion/temporal features can classify AI vs. human-edited videos with ~0.86–0.88 accuracy, indicating systematic differences beyond visual appearance.
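A minimal sketch of how such a comparison could be set up is shown below. The write-up does not name the model or the statistical test, so the random forest, the 5-fold stratified cross-validation, and the Mann-Whitney U test are all assumptions chosen for illustration, as is the function name `evaluate_separability`.

```python
import numpy as np
from scipy.stats import mannwhitneyu
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score


def evaluate_separability(X, y, feature_names, seed=0):
    """Return mean cross-validated accuracy and a per-feature p-value.

    X: (n_videos, n_features) array of temporal-motion features.
    y: 1 for AI-generated, 0 for human-edited (label coding is an assumption).
    """
    X, y = np.asarray(X, dtype=float), np.asarray(y)

    # Assumed model and protocol: random forest, 5-fold stratified CV.
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    clf = RandomForestClassifier(n_estimators=200, random_state=seed)
    accuracy = cross_val_score(clf, X, y, cv=cv, scoring="accuracy")

    # Assumed test: two-sided Mann-Whitney U on each feature, AI vs. human.
    p_values = {
        name: float(
            mannwhitneyu(X[y == 1, j], X[y == 0, j], alternative="two-sided").pvalue
        )
        for j, name in enumerate(feature_names)
    }
    return float(accuracy.mean()), p_values
```

Reporting cross-validated accuracy rather than training accuracy matters here: with a small video set, a model can fit the training clips perfectly without the features being genuinely discriminative.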

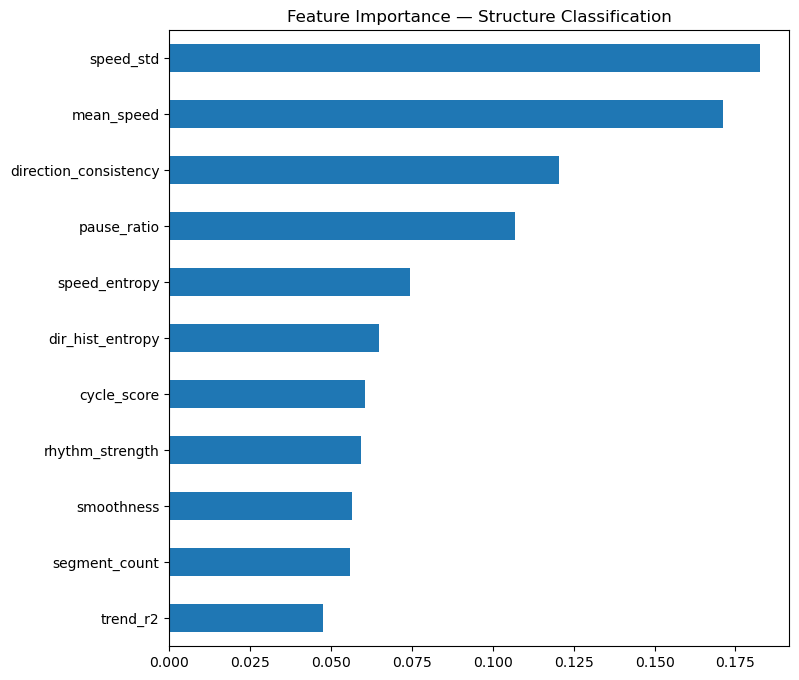

The most discriminative signals relate to motion variability and rhythm complexity: features such as speed variability (speed_std), motion smoothness, speed entropy, direction consistency, pause ratio, and segment count contribute strongly to separation between AI and human-edited videos.
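To make the feature names above concrete, here is one way they could be computed from a per-frame speed and direction series. The formulas, the pause threshold, and the histogram bin count are illustrative assumptions; the project's exact definitions are not given in this write-up, and `rhythm_features` is a hypothetical name.

```python
import numpy as np


def rhythm_features(speed, direction, pause_thresh=0.1, n_bins=16):
    """Summarize per-frame speed/direction series (length >= 2) into rhythm features.

    Every definition below is an assumption made for illustration.
    """
    speed = np.asarray(speed, dtype=float)
    direction = np.asarray(direction, dtype=float)

    # Shannon entropy (in bits) of the speed histogram.
    counts, _ = np.histogram(speed, bins=n_bins)
    p = counts[counts > 0] / counts.sum()
    speed_entropy = float(-(p * np.log2(p)).sum())

    # Frames below the threshold count as pauses; a segment is a contiguous
    # run of frames that are either all paused or all moving.
    paused = speed < pause_thresh
    segment_count = int(1 + np.count_nonzero(paused[1:] != paused[:-1]))

    return {
        "speed_std": float(speed.std()),
        # Equals 1.0 for perfectly steady speed and shrinks as speed gets jumpier.
        "motion_smoothness": float(1.0 / (1.0 + np.abs(np.diff(speed)).mean())),
        "speed_entropy": speed_entropy,
        # Mean resultant length of the direction angles (1.0 = fully consistent).
        "direction_consistency": float(np.abs(np.exp(1j * direction).mean())),
        "pause_ratio": float(paused.mean()),
        "segment_count": segment_count,
    }
```

Under these definitions, a clip with steady motion gives zero `speed_std`, zero `speed_entropy`, and a single segment, while a stop-and-go clip gives higher variability, higher entropy, and many segments.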

Overall, AI-generated video tends to be “visually rich but temporally conservative”: compared to human edits, AI outputs more often maintain consistent pacing rather than introducing deliberate structural turning points.

Business Impact

Based on the results of this project, I observed that current AI-generated videos can produce visually rich and stable imagery, but often rely on more continuous, smooth, and linear temporal patterns. Compared to human-edited videos, AI-generated videos show fewer clear pacing transitions, tension build-ups, or intentionally designed attention peaks.

From an advertising and marketing perspective, this does not mean AI-generated video is ineffective. Instead, it suggests that AI video may be better suited for scenarios where complex pacing is less critical, but visual engagement and information clarity still matter. Many marketing touchpoints do not require full narrative pacing — simply adding motion can already improve attention retention and information delivery efficiency. In these cases, AI-generated video can significantly reduce production time and cost.

Scenarios Where AI Video May Be Used More Directly

(Short motion content / lower pacing complexity)

Email marketing and visual email attachments

Adding subtle motion (micro-loops, light effects, floating motion, texture shifts) to hero images or product visuals can increase engagement and dwell time without requiring complex editing rhythm.

Social media animated visuals (extension of static key visuals)

Turning a static key visual (KV) into a 3–5 second atmosphere motion loop can help increase feed attention, support campaign warm-up, and strengthen brand visual identity.

Landing page or in-app motion assets

Background motion videos, looping atmosphere animations, and micro-interactions improve perceived product quality and visual interest without relying on narrative pacing.

Brand atmosphere or world-building visuals

Event opening screens, retail displays, exhibition screens, and product launch ambient visuals are cases where emotional tone and visual consistency matter more than rhythm variation.

Rapid A/B testing creative generation

AI can quickly generate multiple motion visual directions to test visual style performance; the winning direction can then be refined through human editing.

Across these scenarios, motion itself often attracts more attention and communicates information more effectively than fully static visuals, even without complex narrative pacing.

Scenarios Where Human Post-Editing Is Still Important

(Longer-form video / pacing-driven performance)

For longer marketing video assets (such as 15-second+ ads, story-driven short videos, or performance-driven promotional videos), pacing variation, emotional timing, and structured information sequencing directly influence completion rate and conversion performance.

Based on the “temporal stability tendency” observed in this project, these types of content may benefit from a hybrid workflow:

AI generation provides:

Visual assets

Atmosphere shots

Transition materials

Supporting motion sequences

Human editing provides:

Narrative pacing

Information hierarchy

Attention control points

Emotional timing (pauses, acceleration, contrast, beat alignment)

Summary

Based on these findings, I tend to view AI-generated video as a highly efficient visual content generation tool. It can significantly improve production efficiency for short motion visuals, atmosphere content, and animated extensions of static design.

For longer-form marketing content that relies on complex pacing and storytelling structure, a hybrid workflow — where AI generates visual material and humans refine rhythm and editing — may currently provide a better balance between production efficiency and commercial performance.